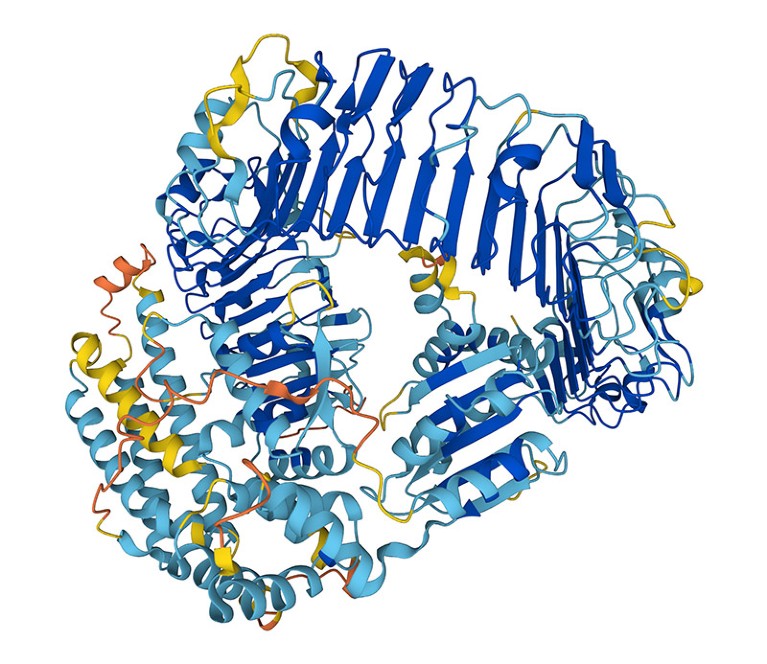

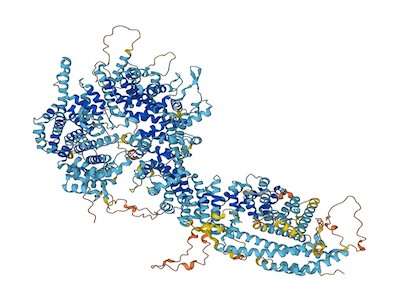

The artificial-intelligence tool AlphaFold can design proteins to perform specific functions. Credit: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Could proteins designed by artificial intelligence (AI) ever be used as bioweapons? In the hope of heading off this possibility — as well as the prospect of burdensome government regulation — researchers today launched an initiative calling for the safe and ethical use of protein design.

“The potential benefits of protein design [AI] far exceed the dangers at this point,” says David Baker, a computational biophysicist at the University of Washington in Seattle, who is part of the voluntary initiative. Dozens of other scientists applying AI to biological design have signed the initiative’s list of commitments.

“It’s a good start. I’ll be signing it,” says Mark Dybul, a global health policy specialist at Georgetown University in Washington DC who led a 2023 report on AI and biosecurity for the think tank Helena in Los Angeles, California. But he also thinks that “we need government action and rules, and not just voluntary guidance”.

The initiative comes on the heels of reports from US Congress, think tanks and other organizations exploring the possibility that AI tools — ranging from protein-structure prediction networks such as AlphaFold to large language models such as the one that powers ChatGPT — could make it easier to develop biological weapons, including new toxins or highly transmissible viruses.

Designer-protein dangers

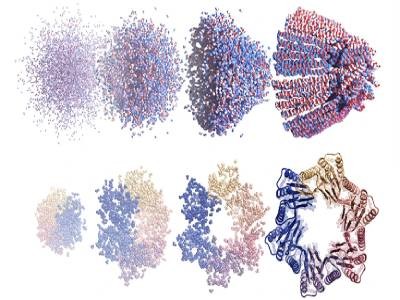

Researchers, including Baker and his colleagues, have been trying to design and make new proteins for decades. But their capacity to do so has exploded in recent years thanks to advances in AI. Endeavours that once took years or were impossible — such as designing a protein that binds to a specified molecule — can now be achieved in minutes. Most of the AI tools that scientists have developed to enable this are freely available.

To take stock of the potential for malevolent use of designer proteins, Baker’s Institute of Protein Design at the University of Washington hosted an AI safety summit in October 2023. “The question was: how, if in any way, should protein design be regulated and what, if any, are the dangers?” says Baker.

The initiative that he and dozens of other scientists in the United States, Europe and Asia are rolling out today calls on the biodesign community to police itself. This includes regularly reviewing the capabilities of AI tools and monitoring research practices. Baker would like to see his field establish an expert committee to review software before it is made widely available and to recommend ‘guardrails’ if necessary.

The initiative also calls for improved screening of DNA synthesis, a key step in translating AI-designed proteins into actual molecules. Currently, many companies providing this service are signed up to an industry group, the International Gene Synthesis Consortium (IGSC), that requires them to screen orders to identify harmful molecules such as toxins or pathogens.

“The best way of defending against AI-generated threats is to have AI models that can detect those threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis company in South San Francisco, California, and chair of the IGSC.

Risk assessment

Governments are also grappling with the biosecurity risks posed by AI. In October 2023, US President Joe Biden signed an executive order calling for an assessment of such risks and raising the possibility of requiring DNA-synthesis screening for federally funded research.

Baker hopes that government regulation isn’t in the field’s future — he says it could limit the development of drugs, vaccines and materials that AI-designed proteins might yield. Diggans adds that it’s unclear how protein-design tools could be regulated, because of the rapid pace of development. “It’s hard to imagine regulation that would be appropriate one week and still be appropriate the next.”

But David Relman, a microbiologist at Stanford University in California, says that scientist-led efforts are not sufficient to ensure the safe use of AI. “Natural scientists alone cannot represent the interests of the larger public.”